In modern enterprise AI systems, success is no longer defined only by how well a model is trained but by how efficiently it performs in production. This is where an AI Inference Strategy becomes a defining factor for scalability, speed, and real-world impact. A strong strategy ensures that machine learning models deliver consistent, low-latency predictions across business operations while keeping costs and security risks under control. Organizations that never design a structured AI Inference Strategy often struggle with performance bottlenecks and unpredictable outcomes, which directly undermines the business value their models were meant to create.

A well-planned AI Inference Strategy helps enterprises move beyond experimentation to production-grade systems that support decision-making, automation, and better customer experiences. As AI adoption spreads across industries, the strategy matters more and more, especially where predictions must arrive in real time.

The Strategic Role of AI Inference in Enterprise Intelligence

The foundation of enterprise AI success lies in how effectively models are deployed for inference, the stage where a trained model receives new data and returns predictions. An effective AI Inference Strategy ensures that trained models are not only accurate but also responsive and scalable under real workloads. In enterprise environments, inference powers applications such as fraud detection, recommendation systems, predictive analytics, and automated workflows.

A strong AI Inference Strategy also bridges the gap between data science teams and IT operations, so that models move from development to production without performance degradation. Without that structure, organizations risk inconsistent outputs and inefficient resource utilization.

Enterprises increasingly treat their AI Inference Strategy as a long-term architectural decision rather than a one-off deployment choice, reflecting the growing importance of operational AI maturity in competitive markets.

Why AI Inference Strategy Determines AI Success

The success of any AI initiative depends heavily on its inference strategy. Training determines how accurate a model can be; inference determines whether that accuracy is usable in practice. A poorly designed AI Inference Strategy leads to delays, high operational costs, and inconsistent performance.

Organizations that plan for inference early in the AI lifecycle are better positioned to scale. A structured strategy lets models absorb real-time traffic spikes and stay stable under varying workloads.

Inference strategy also affects how quickly a business can respond to changing market conditions: faster inference enables faster decisions, which directly affects competitiveness. This makes it a critical pillar of digital transformation initiatives.

Cloud vs On-Prem Tradeoffs in AI Inference Strategy

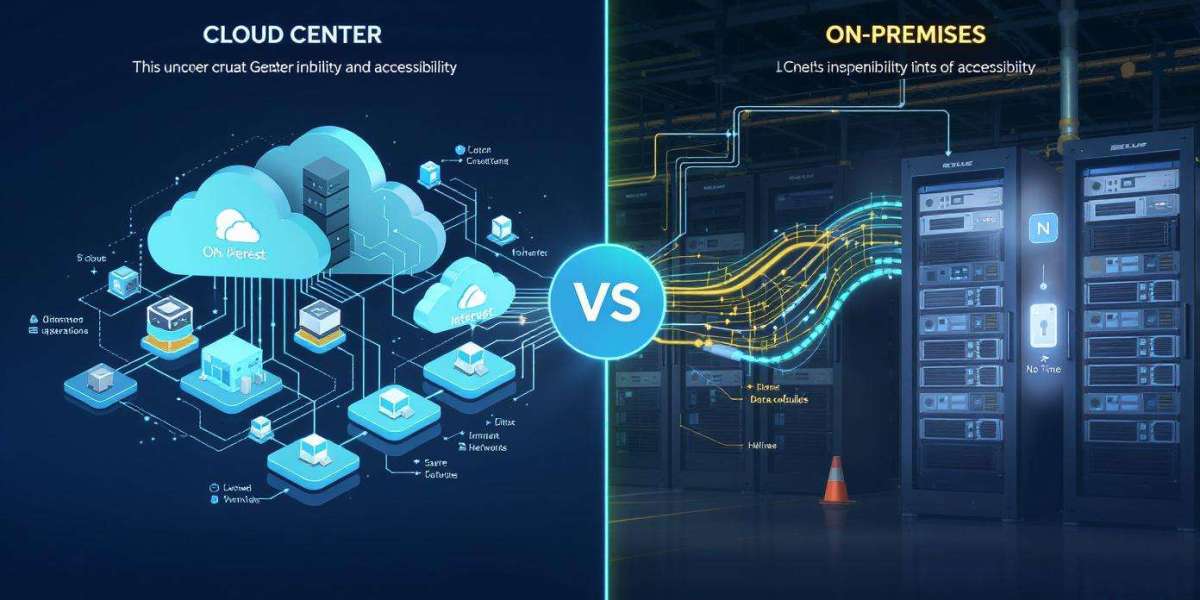

One of the most important decisions in designing an AI Inference Strategy is choosing between cloud and on-prem infrastructure. Cloud inference offers flexibility, elastic scaling, and rapid deployment. Resources can be adjusted dynamically as demand changes, which suits unpredictable workloads.

On-prem inference, by contrast, provides greater control over data, security, and latency. Industries with strict compliance requirements often choose on-prem systems to retain full data governance.

Many enterprises now adopt hybrid models that combine both approaches, for example keeping sensitive or steady workloads on-prem while sending overflow traffic to the cloud. A hybrid strategy lets organizations balance performance, cost, and security by distributing workloads across environments.
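
As a minimal sketch of how a hybrid setup might distribute traffic, the Python below routes each request using two assumed rules: requests carrying sensitive data always stay on-prem, and all other requests use on-prem capacity first, overflowing to the cloud when that capacity is full. The class names, the `capacity` and `in_flight` fields, and the `route` function are hypothetical; a production router would also weigh endpoint health, cost, and measured latency.

```python
from dataclasses import dataclass


@dataclass
class InferenceRequest:
    request_id: str
    contains_sensitive_data: bool  # e.g. regulated personal or financial data


@dataclass
class OnPremCluster:
    capacity: int        # hypothetical max concurrent requests on-prem
    in_flight: int = 0   # requests currently being served (tracked by the caller)


def route(request: InferenceRequest, cluster: OnPremCluster) -> str:
    """Return "on-prem" or "cloud" for a single request."""
    # Sensitive data never leaves the controlled environment, even under load.
    if request.contains_sensitive_data:
        return "on-prem"
    # Non-sensitive traffic uses owned capacity first...
    if cluster.in_flight < cluster.capacity:
        return "on-prem"
    # ...and bursts to the cloud only when on-prem is saturated.
    return "cloud"
```

With `OnPremCluster(capacity=2, in_flight=2)`, a non-sensitive request is routed to `"cloud"`, while a sensitive one still returns `"on-prem"`, where it would wait for capacity rather than leave the controlled environment.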

Performance Optimization and Latency Control

Performance is a critical component of any AI Inference Strategy. Low latency is essential for applications such as real-time recommendations, autonomous systems, and financial trading platforms. A well-optimized strategy processes inference requests quickly without an unacceptable loss of accuracy.

Cloud environments can add latency through network round trips between the calling application and the inference endpoint, while on-prem systems located close to the applications they serve often respond faster. This tradeoff is a key consideration when designing inference for latency-sensitive applications.
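
The tradeoff can be made concrete with a simple latency budget: end-to-end latency is roughly network round trip plus queueing time plus model compute. The sketch below compares two hypothetical deployments against an assumed 50 ms budget; every figure is an illustrative placeholder, not a measurement.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str
    network_rtt_ms: float  # round trip between caller and inference endpoint
    queue_ms: float        # time spent waiting for a free model replica
    compute_ms: float      # model forward pass

    def end_to_end_ms(self) -> float:
        return self.network_rtt_ms + self.queue_ms + self.compute_ms


def fits_budget(deployment: Deployment, budget_ms: float) -> bool:
    return deployment.end_to_end_ms() <= budget_ms


# Hypothetical numbers: faster accelerators in the cloud, but a longer network path.
cloud = Deployment("cloud", network_rtt_ms=40.0, queue_ms=5.0, compute_ms=15.0)
on_prem = Deployment("on-prem", network_rtt_ms=2.0, queue_ms=5.0, compute_ms=25.0)

for d in (cloud, on_prem):
    print(d.name, d.end_to_end_ms(), fits_budget(d, budget_ms=50.0))
```

With these assumed numbers the cloud deployment totals 60 ms despite faster compute, while the on-prem one totals 32 ms, showing how network overhead can dominate when the model itself is fast.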

A modern AI Inference Strategy often combines optimization techniques such as model compression (for example quantization or pruning), caching of repeated predictions, and edge deployment, which moves inference closer to where data is generated.
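
Caching is the simplest of these techniques to illustrate. The sketch below memoizes predictions with Python's standard `functools.lru_cache`, so repeated identical inputs skip the model entirely. `run_model` is a hypothetical stand-in for a real forward pass, and the cache size of 10,000 entries is an arbitrary assumption.

```python
from functools import lru_cache

CALLS = {"model": 0}  # counts real model invocations, for illustration


def run_model(features: tuple[float, ...]) -> float:
    """Hypothetical stand-in for an expensive model forward pass."""
    CALLS["model"] += 1
    return sum(features) / len(features)


@lru_cache(maxsize=10_000)
def cached_predict(features: tuple[float, ...]) -> float:
    # Tuples are hashable, so identical inputs hit the cache instead of the model.
    return run_model(features)


print(cached_predict((1.0, 2.0, 3.0)))  # 2.0, computed by the model
print(cached_predict((1.0, 2.0, 3.0)))  # 2.0, served from the cache
print(CALLS["model"])                    # 1
```

This only pays off when identical inputs recur and the model is deterministic. After deploying a new model version, calling `cached_predict.cache_clear()` discards stale results.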

Cost Efficiency and Infrastructure Planning

Cost management is another crucial aspect of AI Inference Strategy. Cloud inference is typically billed on usage, which can be cost-effective for variable workloads. However, continuous high-volume usage can push long-term expenses above the cost of owned hardware.

On-prem infrastructure requires upfront investment but can lower operating costs over time. A well-balanced strategy weighs both capital expenditure (CapEx) and operational expenditure (OpEx) to find the most efficient approach.
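
One way to compare the two cost models is a break-even calculation: on-prem hardware pays back its CapEx once the monthly savings versus the cloud bill add up to that upfront cost. The sketch below assumes hypothetical prices and ignores factors such as hardware refresh cycles, financing, and future price changes.

```python
import math


def monthly_cloud_cost(gpu_hours_per_month: float, rate_per_hour: float) -> float:
    """Usage-based cloud bill: pay only for the hours actually consumed."""
    return gpu_hours_per_month * rate_per_hour


def break_even_months(capex: float, on_prem_opex_per_month: float,
                      cloud_cost_per_month: float) -> float:
    """Months until on-prem CapEx is recovered through lower monthly spend."""
    monthly_savings = cloud_cost_per_month - on_prem_opex_per_month
    if monthly_savings <= 0:
        return math.inf  # at this usage level, on-prem never pays back
    return capex / monthly_savings


# Hypothetical figures for illustration only.
steady = monthly_cloud_cost(gpu_hours_per_month=720, rate_per_hour=10.0)  # always-on workload
bursty = monthly_cloud_cost(gpu_hours_per_month=100, rate_per_hour=10.0)  # occasional workload

print(break_even_months(capex=120_000, on_prem_opex_per_month=1_200, cloud_cost_per_month=steady))
print(break_even_months(capex=120_000, on_prem_opex_per_month=1_200, cloud_cost_per_month=bursty))
```

Under these assumptions the always-on workload breaks even after 20 months, while the occasional workload never does (`inf`), which mirrors the point that usage-based pricing favors variable demand and owned hardware favors continuous high volume.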

Organizations that align their AI Inference Strategy with actual workload patterns achieve more predictable costs and better resource utilization.

Security and Compliance Alignment

Security considerations strongly shape AI Inference Strategy decisions. Enterprises handling sensitive data must ensure that their inference pipeline complies with regulatory frameworks and internal governance policies.

On-prem deployments give direct control over data security, while major cloud providers offer mature security frameworks and encryption standards. A strong AI Inference Strategy builds security into every stage of the inference pipeline.

Compliance requirements such as data residency laws and industry regulations must be built into the AI Inference Strategy from the start to avoid legal and operational risks.
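
As a minimal sketch of embedding a data residency rule directly in the inference path, the Python below selects an endpoint only from regions a policy permits for the data's jurisdiction and fails closed otherwise. The policy table, region names, and `select_endpoint` function are hypothetical and not tied to any real cloud provider's region identifiers.

```python
# Hypothetical residency policy: regions permitted to process data from each jurisdiction.
ALLOWED_REGIONS: dict[str, set[str]] = {
    "EU": {"eu-west", "eu-central", "onprem-frankfurt"},
    "US": {"us-east", "us-west", "onprem-dallas"},
}


class ResidencyViolation(Exception):
    """Raised when no candidate endpoint is permitted for the data's jurisdiction."""


def select_endpoint(data_jurisdiction: str, candidates: list[str]) -> str:
    """Pick the first permitted endpoint; candidates are assumed ordered by preference."""
    # An unknown jurisdiction maps to an empty set, so nothing is allowed.
    allowed = ALLOWED_REGIONS.get(data_jurisdiction, set())
    for region in candidates:
        if region in allowed:
            return region
    # Fail closed: refusing the request is safer than sending data to a non-compliant region.
    raise ResidencyViolation(
        f"no permitted endpoint for {data_jurisdiction} data among {candidates}"
    )
```

For example, `select_endpoint("EU", ["us-east", "eu-west"])` returns `"eu-west"` even though `"us-east"` was listed first, because the policy check takes precedence over latency or cost preferences.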

Scaling AI Systems with the Right AI Inference Strategy

Scalability is one of the most important benefits of a well-designed AI Inference Strategy. Cloud environments scale quickly to absorb sudden increases in traffic, whereas on-prem systems require purchasing and installing new hardware, which slows scaling.

A scalable strategy lets AI systems grow with business demand without performance degradation. This matters most for enterprises expanding into new markets or launching data-intensive applications.

Dynamic scaling, which automatically adds or removes model replicas as load changes, helps keep systems reliable under heavy workloads.
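
Dynamic scaling is commonly driven by a proportional rule. Kubernetes' Horizontal Pod Autoscaler, for example, computes the desired replica count as `ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The sketch below applies that rule to a hypothetical per-replica load metric (such as requests per second) with assumed minimum and maximum bounds; the real autoscaler adds a tolerance band and stabilization behavior that are omitted here.

```python
import math


def desired_replicas(current_replicas: int, current_load_per_replica: float,
                     target_load_per_replica: float,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Scale replica count in proportion to how far load is from the target."""
    raw = math.ceil(current_replicas * (current_load_per_replica / target_load_per_replica))
    # Clamp: never exceed budgeted capacity, never drop below min_replicas.
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(4, current_load_per_replica=150, target_load_per_replica=100))  # 6
print(desired_replicas(4, current_load_per_replica=900, target_load_per_replica=100))  # 20 (clamped)
print(desired_replicas(4, current_load_per_replica=10, target_load_per_replica=100))   # 1
```

The upper bound is what keeps a traffic spike from turning into an unbounded bill in the cloud; on-prem, the same bound would simply reflect the hardware actually installed.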

Real-World Enterprise Applications

Across industries, well-planned inference is driving innovation in multiple domains. In retail, it powers personalized shopping experiences. In healthcare, it supports diagnostic assistance systems. In finance, it enables fraud detection and risk assessment models.

Each use case requires a tailored AI Inference Strategy that matches its latency, cost, and compliance requirements, so organizations can optimize AI performance for industry-specific needs.

As enterprises continue to expand AI adoption, AI Inference Strategy becomes a foundational element of digital infrastructure planning.

Common Mistakes in AI Inference Strategy

Many organizations underestimate the complexity of inference and focus only on model training. One common mistake is ignoring latency optimization, which leads to slow system responses.

Another frequent issue is poor alignment between infrastructure and workload. Without a well-defined AI Inference Strategy, businesses often face scalability problems and unexpected cost overruns.

A lack of monitoring and performance tracking also weakens an AI Inference Strategy, because inefficiencies in production systems go undetected.
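
Monitoring can start with something as simple as tracking tail latency rather than averages. The sketch below computes a nearest-rank 95th-percentile latency over a window of samples and checks it against an assumed budget; the sample window and the 100 ms budget are hypothetical.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of latency samples (0 < pct <= 100)."""
    if not samples:
        raise ValueError("no latency samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based nearest rank
    return ordered[rank - 1]


def check_latency_slo(latencies_ms: list[float], p95_budget_ms: float) -> tuple[float, bool]:
    """Return the observed p95 latency and whether it meets the budget."""
    p95 = percentile(latencies_ms, 95)
    return p95, p95 <= p95_budget_ms


# Hypothetical window: most requests are fast, but 10% hit a slow path.
window = [20.0] * 90 + [300.0] * 10
print(sum(window) / len(window))                       # mean: 48.0
print(check_latency_slo(window, p95_budget_ms=100.0))  # (300.0, False)
```

The 48 ms mean looks healthy, but the 300 ms p95 reveals that one request in ten is slow, exactly the kind of inefficiency that stays hidden without percentile tracking.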

Continuous Evaluation and Refinement

A successful AI deployment depends on continuously evaluating and refining the AI Inference Strategy. Organizations should regularly assess model performance, infrastructure utilization, and cost efficiency to keep results on target. Emerging technologies such as edge computing and distributed AI frameworks continue to widen the options for inference design.

Enterprises that treat their AI Inference Strategy as a dynamic, evolving framework rather than a static implementation are better equipped to handle future AI demands and technological shifts.

At BusinessInfoPro, we equip entrepreneurs, small business owners, and professionals with practical insights, proven strategies, and essential tools to drive growth. By breaking down complex concepts in business, marketing, and operations, we transform challenges into clear opportunities, helping you confidently navigate today’s fast-paced market. Your success is at the heart of what we do because as you thrive, so do we.